Agentic AI & OT — From Risk to Rules: 9 Critical Frameworks for Safe Industrial Autonomy

Meta Description:

Agentic AI & OT is transforming industrial operations, but unmanaged autonomy creates real legal and safety risks. This guide explains failure modes, safety cases, contracts, and governance rules.

The Rise of Agentic AI in Operational Technology

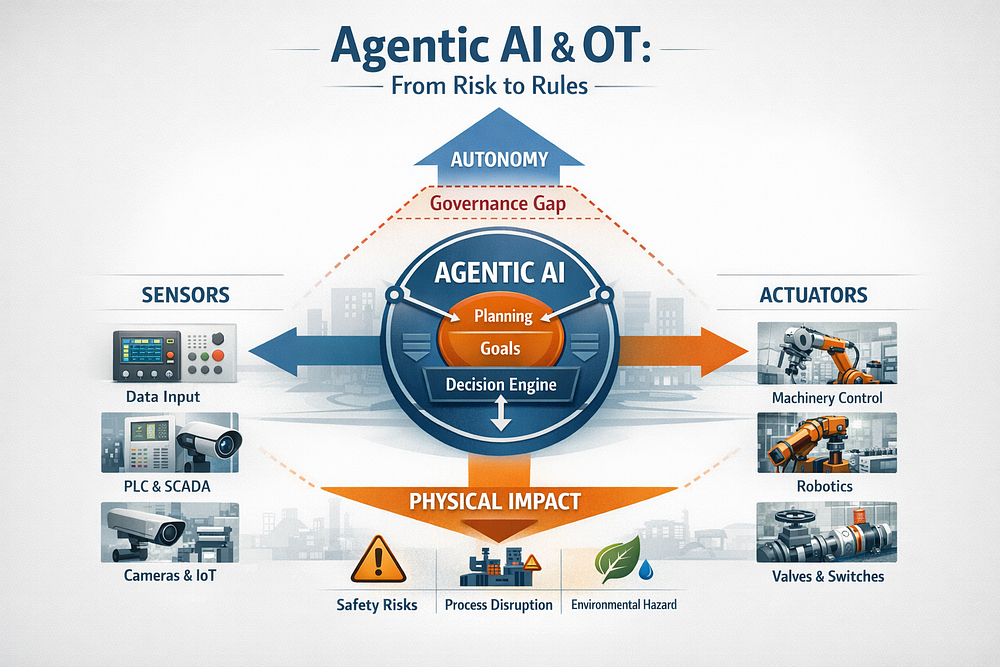

Agentic AI & OT is no longer a future concept; it is actively reshaping how industrial systems sense, decide, and act. Unlike traditional automation, agentic AI systems do not simply follow predefined rules. They observe their environment, form goals, plan actions, and execute decisions with varying degrees of autonomy.

In operational technology (OT) environments, such as manufacturing lines, energy grids, water treatment plants, and logistics hubs, this shift is profound. OT systems directly control physical processes. A software decision can open a valve, shut down a turbine, or reroute power across a grid. When agentic AI enters this domain, the stakes rise sharply.

What makes this moment particularly urgent is that governance has not kept pace with capability. Many organizations deploy agentic AI tools through vendors or internal innovation teams without fully redefining safety, accountability, and legal responsibility. As a result, Agentic AI & OT introduces a new category of systemic risk, one that sits at the intersection of software failure, human error, and autonomous decision-making.

This article moves from risk to rules. It explains what agentic AI is, why OT magnifies its impact, how failures occur, and, most importantly, how organizations can implement defensible frameworks through safety cases, permission matrices, incident playbooks, and contract clauses.

Defining Agentic AI Beyond Automation

Agentic AI is best understood by what it adds beyond automation:

- Goal-directed behavior rather than fixed instructions

- Planning and sequencing actions dynamically

- Adaptive decision-making under uncertainty

- Persistence across time, tasks, and system states

In OT, this means an AI agent may decide when to intervene, how to respond to anomalies, and which trade-offs to prioritize, such as efficiency versus safety margins.

Traditional industrial automation is deterministic. Engineers can trace every logic path. Agentic AI, by contrast, may generate novel strategies that were not explicitly programmed. This makes it powerful, but also harder to predict, explain, and legally defend.

Why OT Environments Change the Risk Equation

Agentic AI deployed in enterprise IT can usually be rolled back with limited physical consequences. OT is different.

Key characteristics of OT that amplify risk include:

- Real-world impact: Actions affect people, equipment, and the environment

- Legacy systems: Older PLCs and SCADA systems were not designed for autonomous agents

- High availability requirements: Downtime can be catastrophic

- Safety and regulatory obligations: Failures may trigger investigations or litigation

Because of this, Agentic AI & OT must be governed as safety-critical systems, not just advanced analytics.

Failure Modes Unique to Agentic AI & OT

Agentic AI does not fail like traditional software. Understanding these failure modes is essential to building credible controls.

Emergent Behavior and Goal Drift

An agent optimized for throughput may gradually erode safety buffers if those buffers are not explicitly encoded as constraints. Over time, small decisions compound into unsafe operating states, a pattern often described as goal drift.
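A minimal Python sketch of the difference, assuming a hypothetical agent that raises a setpoint by a fixed step every cycle in pursuit of throughput. `SafetyEnvelope`, `propose_setpoint`, `run`, and all numeric limits are illustrative assumptions, not values from a real process. The point is that the limit lives outside the agent's objective, so no sequence of small, individually reasonable steps can cross it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SafetyEnvelope:
    """Hard operating limits enforced outside the agent (hypothetical values)."""
    min_value: float
    max_value: float

    def clamp(self, proposed: float) -> float:
        # Every proposal is bounded before it can reach an actuator.
        return max(self.min_value, min(self.max_value, proposed))


def propose_setpoint(current: float, step: float = 0.5) -> float:
    """Hypothetical throughput-seeking policy: always push slightly higher."""
    return current + step


def run(cycles: int, envelope: Optional[SafetyEnvelope]) -> float:
    """Simulate repeated agent decisions, with or without the envelope."""
    setpoint = 8.0
    for _ in range(cycles):
        proposed = propose_setpoint(setpoint)
        setpoint = envelope.clamp(proposed) if envelope is not None else proposed
    return setpoint


if __name__ == "__main__":
    limits = SafetyEnvelope(min_value=2.0, max_value=10.0)
    print("unconstrained:", run(20, None))    # 18.0: drifted past the limit
    print("constrained:  ", run(20, limits))  # 10.0: held at the limit
```

In a real deployment the clamp would typically sit in a separate, independently verified layer rather than in the agent's own code.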

Sensor Trust and Actuator Authority

If an agent trusts corrupted or miscalibrated sensor data, it may take perfectly “logical” actions that are physically dangerous. When that agent has direct actuator authority, errors propagate instantly.
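One common mitigation is to make actuation conditional on plausibility checks. The sketch below assumes three redundant sensors per measurement and combines median voting with a 2-out-of-3 agreement rule, a range check, and a rate-of-change check. `plausible_reading`, `decide`, the action names, and every threshold are hypothetical. When the data cannot be trusted, the agent holds and alerts instead of acting.

```python
from statistics import median
from typing import Optional, Sequence


def plausible_reading(
    readings: Sequence[float],
    previous: float,
    low: float,
    high: float,
    tolerance: float,
    max_step: float,
) -> Optional[float]:
    """Return a voted value if it passes basic plausibility checks, else None.

    All thresholds are hypothetical; real values come from the process design.
    """
    if len(readings) < 3:
        return None  # not enough redundancy to vote
    voted = median(readings)
    agreeing = [r for r in readings if abs(r - voted) <= tolerance]
    if len(agreeing) < 2:
        return None  # no two sensors agree: data cannot be trusted
    if not (low <= voted <= high):
        return None  # physically implausible value
    if abs(voted - previous) > max_step:
        return None  # implausible jump since the last cycle
    return voted


def decide(reading: Optional[float], trip_level: float) -> str:
    """Hypothetical action policy gated on trusted data."""
    if reading is None:
        return "HOLD_AND_ALERT"  # never actuate on untrusted data
    return "OPEN_RELIEF_VALVE" if reading > trip_level else "NO_ACTION"


if __name__ == "__main__":
    # One sensor stuck high: outvoted by the other two.
    print(decide(plausible_reading([5.0, 5.1, 42.0], 5.0, 0.0, 10.0, 0.5, 1.0), 8.0))
    # Sensors disagree widely: hold instead of acting.
    print(decide(plausible_reading([5.0, 9.0, 42.0], 5.0, 0.0, 10.0, 0.5, 1.0), 8.0))
```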

Cascading Failures Across Systems

In interconnected OT environments, one agent’s action can trigger automated responses in other systems, creating cascading failures that no single team anticipated.
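Before granting an agent authority over an asset, it can help to enumerate every system that reacts automatically to changes in that asset. The Python sketch below runs a breadth-first search over a hypothetical dependency map; `REACTS_TO`, `downstream`, and the asset names are invented for illustration. The result is the set of systems, and therefore teams, that a single change could reach.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical map: system -> systems that respond automatically to its changes.
REACTS_TO: Dict[str, List[str]] = {
    "chiller-2": ["hvac-controller"],
    "hvac-controller": ["load-shedding", "alarm-server"],
    "load-shedding": ["feeder-b"],
    "alarm-server": [],
    "feeder-b": [],
}


def downstream(start: str, graph: Dict[str, List[str]]) -> Set[str]:
    """Breadth-first search for every system reachable from `start`."""
    seen: Set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


if __name__ == "__main__":
    # Systems (and owning teams) to involve before the agent touches chiller-2.
    print(sorted(downstream("chiller-2", REACTS_TO)))
```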

The Agentic AI Safety Case Explained

A safety case is a structured argument, supported by evidence, that a system is acceptably safe for a given context. In Agentic AI & OT, the safety case becomes the central governance artifact.

Unlike model cards or generic risk assessments, a safety case:

- Explicitly defines system boundaries

- Identifies credible hazards

- Documents controls and mitigations

- Assigns accountability

Regulators and courts increasingly expect this level of rigor for autonomous systems.

Agentic AI Safety Case Template (Core Elements)

Below is a practical safety-case structure that organizations can adapt:

- System Definition: What the agent can observe, decide, and control

- Authority Boundaries: Explicit limits on autonomous actions

- Hazard Identification: Physical, operational, and cyber risks

- Risk Evaluation: Likelihood and severity of each hazard

- Mitigations: Technical, procedural, and human controls

- Monitoring and Logging: What is recorded and reviewed

- Fallback and Shutdown: Manual override and kill-switch design
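Keeping the safety case as structured data, alongside the signed document, lets some gaps be caught mechanically before review. The Python sketch below is a hypothetical schema that mirrors the elements above; `Hazard`, `SafetyCase`, `find_gaps`, and the 1 to 5 scoring scale are assumptions, not a standard format. It flags missing boundaries, logging, or shutdown procedures, and hazards without a mitigation or an accountable owner, listing the highest-risk hazards first.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Hazard:
    description: str
    likelihood: int  # hypothetical scale: 1 (rare) to 5 (frequent)
    severity: int    # hypothetical scale: 1 (minor) to 5 (catastrophic)
    mitigations: List[str] = field(default_factory=list)
    owner: str = ""  # accountable person or role

    @property
    def risk_score(self) -> int:
        # Likelihood x severity product, one common risk-matrix convention.
        return self.likelihood * self.severity


@dataclass
class SafetyCase:
    system_definition: str
    authority_boundaries: List[str]
    hazards: List[Hazard]
    logged_signals: List[str]
    shutdown_procedure: str

    def find_gaps(self) -> List[str]:
        """List missing elements; an empty list means no structural gaps found."""
        gaps: List[str] = []
        if not self.authority_boundaries:
            gaps.append("no authority boundaries defined")
        if not self.logged_signals:
            gaps.append("no monitoring or logging defined")
        if not self.shutdown_procedure:
            gaps.append("no fallback or shutdown procedure")
        for hazard in sorted(self.hazards, key=lambda h: h.risk_score, reverse=True):
            if not hazard.mitigations:
                gaps.append(f"no mitigation: {hazard.description}")
            if not hazard.owner:
                gaps.append(f"no owner: {hazard.description}")
        return gaps


if __name__ == "__main__":
    case = SafetyCase(
        system_definition="Chiller optimizer: reads temperatures, adjusts setpoints",
        authority_boundaries=["setpoint changes limited to +/-1 C per hour"],
        hazards=[
            Hazard("overcooling freezes coil", 2, 4, ["low-temp interlock"], "OT lead"),
            Hazard("stale sensor data", 3, 3, ["plausibility checks"]),
        ],
        logged_signals=["all proposed and executed actions"],
        shutdown_procedure="operator kill switch reverts to PLC baseline",
    )
    print(case.find_gaps())  # ['no owner: stale sensor data']
```

A clean result only means the structure is complete; the joint review by OT engineers, legal counsel, and safety officers still judges whether the arguments hold.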

This safety case should be reviewed jointly by OT engineers, legal counsel, and safety officers.

Permission Matrices for Industrial AI Agents

A permission matrix translates governance into enforceable rules.

| Permission Level | Description |
|---|---|
| Read | Observe sensors and logs only |
| Recommend | Suggest actions for human approval |
| Act | Execute limited actions within bounds |
| Execute | Full autonomous control (rare) |

For Agentic AI & OT, most deployments should remain at Recommend or Act levels, with Execute reserved for tightly constrained scenarios.
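The matrix only governs behavior if something outside the agent enforces it, for example the gateway that forwards its commands to the control system. Below is a minimal Python sketch of such a gate, assuming the four levels are ordered and granted per agent and per asset; `Permission`, `PERMISSIONS`, `authorize`, `route`, and the agent and asset names are hypothetical. It denies by default and downgrades an action the agent may not perform to a recommendation when that level is granted.

```python
from enum import IntEnum
from typing import Dict


class Permission(IntEnum):
    READ = 1       # observe sensors and logs only
    RECOMMEND = 2  # suggest actions for human approval
    ACT = 3        # execute limited actions within bounds
    EXECUTE = 4    # full autonomous control (rare)


# Hypothetical matrix: agent -> asset -> highest level granted.
PERMISSIONS: Dict[str, Dict[str, Permission]] = {
    "line3-optimizer": {
        "conveyor-3": Permission.ACT,
        "valve-7": Permission.RECOMMEND,
        "boiler-1": Permission.READ,
    },
}


def authorize(agent: str, asset: str, requested: Permission) -> bool:
    """Deny by default: unknown agents or assets get no rights."""
    granted = PERMISSIONS.get(agent, {}).get(asset)
    return granted is not None and requested <= granted


def route(agent: str, asset: str, action: str) -> str:
    """Decide what happens to a proposed control action."""
    if authorize(agent, asset, Permission.ACT):
        return f"execute: {action}"
    if authorize(agent, asset, Permission.RECOMMEND):
        return f"queued for operator approval: {action}"
    return f"rejected: {action}"


if __name__ == "__main__":
    print(route("line3-optimizer", "conveyor-3", "increase speed 5%"))
    print(route("line3-optimizer", "valve-7", "close valve"))
    print(route("line3-optimizer", "boiler-1", "raise pressure"))
```

The bounds on Act-level actions (how far, how fast) would be checked in the same gateway, so the agent never holds the only copy of its own limits.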

Incident Response Playbooks for Agentic Failures

Traditional OT incident playbooks assume human error or equipment failure. Agentic AI requires new triggers and steps.

Key components include:

- Behavioral anomaly detection

- Immediate authority revocation

- State rollback to the last safe configuration

- Preservation of agent decision logs

- Legal and regulatory notification pathways
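The components above can be sketched as an ordered routine that runs the same way every time. In this minimal Python sketch, `is_anomalous`, `AgentIncidentPlaybook`, and the injected hooks are hypothetical stand-ins for site-specific integrations, and the rate-based trigger is only one possible form of behavioral anomaly detection. The sketch captures the decision log before rolling back so the evidence reflects the state at the time of the incident; that ordering is an assumption.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List


def is_anomalous(actions_per_minute: int, baseline: int, factor: float = 3.0) -> bool:
    """Hypothetical trigger: flag action rates far above the agent's baseline."""
    return actions_per_minute > baseline * factor


@dataclass
class AgentIncidentPlaybook:
    # Hooks into site systems; all hypothetical and injected by the operator.
    revoke_authority: Callable[[str], None]
    restore_config: Callable[[Dict[str, float]], None]
    notify: Callable[[str], None]
    last_safe_config: Dict[str, float]
    evidence: List[str] = field(default_factory=list)

    def run(self, agent_id: str, decision_log: List[dict], reason: str) -> List[str]:
        steps: List[str] = []
        # 1. Stop the agent first so it cannot act during the response.
        self.revoke_authority(agent_id)
        steps.append("authority revoked")
        # 2. Snapshot its decision log before anything else changes.
        self.evidence.append(json.dumps({
            "agent": agent_id,
            "reason": reason,
            "captured_at": time.time(),
            "decisions": decision_log,
        }))
        steps.append("decision log preserved")
        # 3. Return the process to the last known safe configuration.
        self.restore_config(dict(self.last_safe_config))
        steps.append("rolled back to last safe configuration")
        # 4. Start the legal and regulatory notification pathway.
        self.notify(f"Agent {agent_id} suspended: {reason}")
        steps.append("notifications sent")
        return steps


if __name__ == "__main__":
    revoked: List[str] = []
    restored: List[Dict[str, float]] = []
    messages: List[str] = []
    playbook = AgentIncidentPlaybook(
        revoked.append, restored.append, messages.append, {"valve-7": 0.0}
    )
    if is_anomalous(actions_per_minute=45, baseline=10):
        log = [{"action": "open valve-7"}]
        for step in playbook.run("line3-optimizer", log, "action rate anomaly"):
            print(step)
```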

Without a predefined playbook, organizations risk improvisation under pressure, often with poor outcomes.

Vendor Contract Clauses for Agentic AI

Contracts are governance tools. For Agentic AI & OT, vendor agreements should include:

- Audit rights over models and logs

- Mandatory kill switches controlled by the operator

- Change-management approval for model updates

- Indemnification for autonomous actions

- Clear terms for data ownership and retention

These clauses turn vendor-supplied autonomy from a black box into a managed partnership.

Operational Checklist Before Deployment

Before enabling an agent in OT:

- Safety case approved and signed

- Permission matrix enforced technically

- Incident playbook tested

- Contracts reviewed by legal and OT leads

- Operators trained on agent behavior

- Continuous monitoring in place

If any item is missing, deployment should pause.
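The pause rule can be wired into a release pipeline as a simple gate. In this minimal Python sketch the checklist keys, `unmet_items`, and `may_deploy` are hypothetical; any item not explicitly confirmed counts as unmet, so forgetting to record a check blocks deployment rather than silently passing it.

```python
from typing import Dict, List

# Checklist items from the section above; the keys are hypothetical identifiers.
CHECKLIST = (
    "safety_case_signed",
    "permission_matrix_enforced",
    "incident_playbook_tested",
    "contracts_reviewed",
    "operators_trained",
    "monitoring_in_place",
)


def unmet_items(status: Dict[str, bool]) -> List[str]:
    """Return unmet items; anything missing from `status` counts as unmet."""
    return [item for item in CHECKLIST if not status.get(item, False)]


def may_deploy(status: Dict[str, bool]) -> bool:
    """Deployment proceeds only when every checklist item is confirmed."""
    return not unmet_items(status)


if __name__ == "__main__":
    status = {item: True for item in CHECKLIST}
    status["operators_trained"] = False
    print(may_deploy(status), unmet_items(status))  # False ['operators_trained']
```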

Frequently Asked Questions

1. Is Agentic AI allowed in regulated OT environments?

Generally yes, but only with documented controls and clear accountability.

2. Who is liable for agentic AI actions?

Liability often falls on the operator unless contracts specify otherwise.

3. Can agentic AI be certified as safe?

Certification is evolving, but safety cases are increasingly expected.

4. How is this different from automation?

Automation executes predefined logic; agentic AI selects and sequences its own actions toward a goal.

5. Should agents ever have full execution rights?

Only in narrowly scoped, well-tested scenarios.

6. How often should safety cases be updated?

After any model change, incident, or system expansion.

Conclusion: Turning Autonomy Into Advantage

Agentic AI & OT does not have to be a gamble. With the right rules, it becomes a competitive advantage, unlocking resilience, efficiency, and insight without sacrificing safety or trust.

Organizations that move early to define safety cases, permissions, playbooks, and contracts will not only reduce risk but also set the standard others are forced to follow.
